Not to jump on any bandwagons, but this situation is now getting to ridiculous levels. Microsoft made a Microsoft account the implicit default when installing Windows 11. I ran into the situation of having to set up an account without internet access, which required a crash course in Shift+F10 followed by running oobe\bypassnro to make it possible to create a local user account during Windows 11 installation.

It was only a matter of time. Microsoft is now planning to get rid of oobe\bypassnro:

> We’re removing the bypassnro.cmd script from the build to enhance security and user experience of Windows 11. This change ensures that all users exit setup with internet connectivity and a Microsoft Account.

Ah yes, enhancing security by making it more difficult to run:

@echo off

reg add HKLM\SOFTWARE\Microsoft\Windows\CurrentVersion\OOBE /v BypassNRO /t REG_DWORD /d 1 /f

shutdown /r /t 0

Here’s a new idea: stop robbing people of the choice of which account type to use.

I’m not being a hater here. I am not even that mad about the change. But what I find utterly infuriating is that Microsoft is now pushing a product that’s broken by design. Up until yesterday, the very machine I am using to type this article had been using a Microsoft account exclusively.

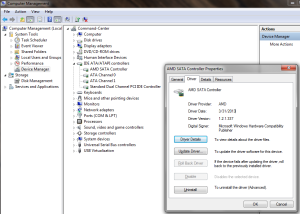

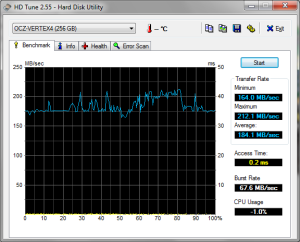

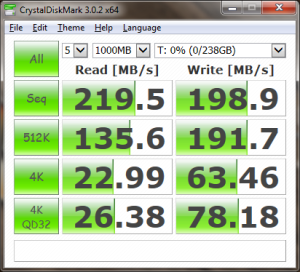

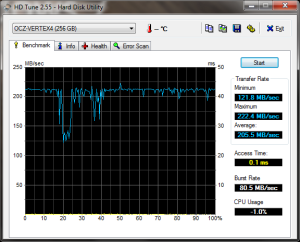

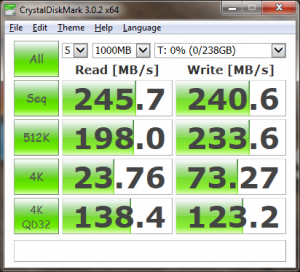

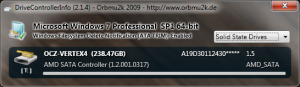

Which takes us to the unintended consequences of pushing this as the default. I needed to boot into safe mode to clear my video driver, as the simple uninstall procedure doesn’t do a good enough job and I needed to run DDU (Display Driver Uninstaller). Basically, the AMD Radeon Pro driver used by my iGPU doesn’t support VRR (FreeSync), while the AMD Adrenalin driver does support VRR, despite installing a “PRO Edition” version of the software. The only difference is that it comes with the default red AMD logo rather than the blue logo used by the Pro version of this driver and, of course, the much-desired VRR. It’s not like I can swap my laptop screen to get a different set of features.

Because entering safe mode is a bit of an annoyance with BitLocker enabled and requires rebooting twice, I simply ran msconfig to enable booting into safe mode via the bootloader config. Here comes the kicker: booting into minimal safe mode actually breaks Windows Hello, so my flashy Microsoft account can’t be used because no authentication method works anymore, whether biometrics or PIN (which for all intents and purposes may be a proper password). Great. I got stuck at a login screen that doesn’t work, even after changing the boot mode to safe mode with networking in a desperate attempt to see whether the flashy “Microsoft account” does anything. Narrator note: it doesn’t.
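For context, the safe boot checkbox in msconfig is, as far as I can tell, just a `safeboot` value stored in the boot configuration data, so the same switch can be flipped with bcdedit. This is a minimal sketch, assuming an elevated Command Prompt inside the running Windows installation (hence `{current}`); it is not something I ran as part of this story.

```bat
:: Sketch only. Run from an elevated Command Prompt, not PowerShell,
:: where the braces would need quoting.
:: {current} is assumed to be the boot entry of the running installation.

:: Boot into minimal safe mode on the next restart
bcdedit /set {current} safeboot minimal

:: Alternative: safe mode with networking
bcdedit /set {current} safeboot network

:: Go back to a normal boot
bcdedit /deletevalue {current} safeboot
```

The flag is persistent: until it is deleted, every reboot lands in safe mode, which is exactly how a machine without a working sign-in method in safe mode ends up locked out.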

This was followed by about an hour of yelling at a screen because the way this default option is implemented is utterly broken. I tried the suggested recovery options: ran the boot troubleshooter, did a system restore, tried to add a local user via the recovery command prompt. None of these worked. What did eventually work was editing the bootloader config via the recovery command prompt:

bcdedit /deletevalue {default} safeboot

This finally undid the change made by msconfig. But the story doesn’t end here, as creating a local account via the standard GUI reached even more ridiculous levels of anti-user behaviour. So much so that I had to watch a YouTube video just to understand what I had been missing.

See, by default, clicking the “Add account” button doesn’t try to add an account; it offers an authentication window for a Microsoft account. So the workaround is to click the completely unintuitive link named “I don’t have this person’s sign-in information”, which then spins up another screen where the “Add a user without a Microsoft account” option is finally available. Which is still rather annoying, as it forces the choice of no fewer than three security questions.

Or, cut out the nonsense and use the CLI:

net user USERNAME PASSWORD /add

As soon as I added a new local user and anointed that local user as administrator, msconfig & friends came back to life and I could carry out the originally intended work without drama, as I had a machine that can actually boot into safe mode and an administrator account I can authenticate with in safe mode.
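The two steps above, creating the local user and promoting it to administrator, can be sketched from an elevated Command Prompt roughly like this. `USERNAME` is a placeholder, and the group name assumes an English-language install (the built-in group name is localized on other systems).

```bat
:: Sketch only. Run from an elevated Command Prompt; USERNAME is a placeholder.

:: Create the local account. The * makes net user prompt for the password
:: instead of taking it in plain text on the command line.
net user USERNAME * /add

:: Add the new account to the local Administrators group.
:: Assumption: English group name; it differs on localized installs.
net localgroup Administrators USERNAME /add

:: Optional check: list the members of the group
net localgroup Administrators
```

Doing this before touching the safe boot setting means there is a password-based administrator account to fall back on when Windows Hello is unavailable.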

Do better, Microsoft.